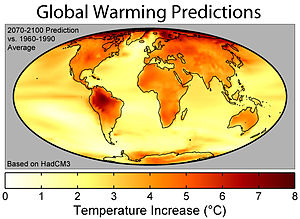

Image via Wikipedia


Very well-written response by Michael Tobis to this question from Jon Pipitone: "Do likely bugs in the software of climate models cast doubt on the scientific consensus on human factors in global warming?"

jon pipitone: Scientific software quality: what would it take to convince software engineers?: "

Michael Tobis said... regebro asks what looks like a reasonable question, but it's based on a fundamental misunderstanding of a question that is roughly equivalent to what the difference between weather and climate is. We have very little skill predicting one year out, even assuming no volcanoes and such. Most of the predictability of the detailed state atmosphere vanishes in three weeks or so.

But at a multidecadal time scale the problem changes character. Technically, we are no longer dealing with an initial value problem but with a boundary value problem, even though the underlying dynamics are the same.

We are not in that case looking for details in any specific year, but for the statistics over an extended period. In a mathematical sense that is an 'easier' problem; it is more constrained by energy balances than by nonlinear fluid dynamics. The messy stuff basically averages out and the residual is what we try to predict.

In fact, maybe I'll use that as a definition of climate. It's 'what you can say about the system after the messy unpredictable part gets averaged out'.

That's the basis for climate change modeling.

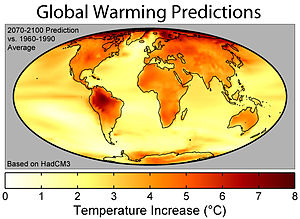

Image via Wikipedia


As for Jon's question, the models are wretched pieces of engineering. No commercial shop would release anything nearly as balky, hard to deploy, or prone to failure. It makes open source look good. And that has almost no bearing on whether they are suitable for the purpose.

In fact, sometimes models are used well and sometimes they are used badly. This in turn is a scientific, not a software question.

All this said, I desperately wish the software were better, and I think we could address many more scientifically meaningful problems much more effectively if it were.

Finally, if you think the question is 'global warming, yes or no' the large models in question are much less relevant than many people would have you believe. The answer to that question is yes, to the extent of about 3 degrees per CO2 doubling.

The idea that such a conclusion comes from complex models is wrong."
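To put a number on the "3 degrees per CO2 doubling" figure in the quote above, here is a minimal sketch of the arithmetic. It is my own illustration, not anything from Tobis's comment. It assumes warming scales with the logarithm of the CO2 concentration ratio (a common approximation that the quote does not state), a 280 ppm baseline, and degrees Celsius; the function and parameter names are hypothetical.

```python
import math


def equilibrium_warming(
    co2_ppm: float,
    baseline_ppm: float = 280.0,            # assumed baseline, not from the quote
    sensitivity_per_doubling: float = 3.0,  # "about 3 degrees per CO2 doubling"
) -> float:
    """Estimate warming in degrees, assuming it is linear in the number of CO2 doublings."""
    if co2_ppm <= 0 or baseline_ppm <= 0:
        raise ValueError("concentrations must be positive")
    # Number of doublings relative to the baseline (negative if below it).
    doublings = math.log2(co2_ppm / baseline_ppm)
    return sensitivity_per_doubling * doublings


if __name__ == "__main__":
    for ppm in (280.0, 420.0, 560.0):
        print(f"{ppm:.0f} ppm: {equilibrium_warming(ppm):.2f} degrees")
```

One doubling from the assumed baseline returns 3 degrees by construction. Anything in between is only as good as the logarithmic assumption, which is exactly the kind of back-of-the-envelope reasoning that does not need a large model.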

I will forgive Mr. Tobis for the dig against open source software ;) I maintain that the gap in quality between the very best and the very worst engineered open source projects makes it very difficult to say anything sensible about open source as a whole.

Also, metrics in software development cannot predict the quality of output of any particular group working on a particular problem; there are too many confounding factors. For example, the developer with the highest bug count often turns out to be the best developer on the team, because nobody else is trusted to tackle the hardest coding issues.


Another issue is making scientific computations reproducible. Even more basic than whether a particular computation is correct is whether another group can reproduce it.

Matthias Schwab, Martin Karrenbach, and Jon Claerbout, "Making scientific computations reproducible," Computing in Science and Engineering, Volume 2, Issue 6 (November 2000), pages 61-67, ISSN 1521-9615.
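As a rough illustration of what "reproducible" can mean in practice, here is a minimal sketch of my own. It is not the approach described in the paper cited above. It assumes a toy computation (a seeded Monte Carlo mean), and the names `run_experiment` and `fingerprint` are hypothetical. The idea is that the result is published together with everything needed to rerun it, plus a hash that another group can compare against.

```python
import hashlib
import json
import random


def run_experiment(seed: int, n_samples: int = 1000) -> dict:
    """Toy computation: the mean of n_samples uniform random draws."""
    rng = random.Random(seed)  # private generator, so no hidden global state
    samples = [rng.random() for _ in range(n_samples)]
    # Return the inputs alongside the output so the run describes itself.
    return {"seed": seed, "n_samples": n_samples, "mean": sum(samples) / n_samples}


def fingerprint(result: dict) -> str:
    """Hash a canonical JSON encoding of the result for easy comparison."""
    encoded = json.dumps(result, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


if __name__ == "__main__":
    first = fingerprint(run_experiment(seed=42))
    second = fingerprint(run_experiment(seed=42))
    print("rerun matches:", first == second)
```

Matching hashes only show that the computation was repeated, not that it is correct, which is the distinction drawn above. Across different machines or library versions, floating-point details may also differ, so a real comparison would likely need a tolerance rather than an exact hash.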

