Took me a while to find this, so let me blog it now, for posterity:

How to override comparison operators in Python

Jp Calderone goes into much more detail than just how to write proper "__eq__" and "__ne__" methods for your own Python classes, but it is surprising how well hidden the details for correctly implementing "__eq__" and "__ne__" are.

I believe the issue is less critical in Python 3, because it does the correct thing when only "__eq__" is implemented: the default "__ne__" calls "__eq__" and inverts the result, unless that result is NotImplemented.

Here is the sample code:

    class A(object):
        def __init__(self, foo):
            self.foo = foo

        def __eq__(self, other):
            if isinstance(other, A):
                return self.foo == other.foo
            return NotImplemented

        def __ne__(self, other):
            result = self.__eq__(other)
            if result is NotImplemented:
                return result
            return not result
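To check the Python 3 claim, here is a minimal sketch, assuming Python 3 and a made-up class named `OnlyEq` that defines "__eq__" and nothing else. It is an illustration, not part of Jp Calderone's article.

```python
class OnlyEq:
    """Hypothetical class that defines __eq__ but deliberately omits __ne__."""

    def __init__(self, foo):
        self.foo = foo

    def __eq__(self, other):
        if isinstance(other, OnlyEq):
            return self.foo == other.foo
        return NotImplemented


same = OnlyEq(1) == OnlyEq(1)        # handled by __eq__
different = OnlyEq(1) != OnlyEq(2)   # Python 3 derives != from __eq__
# Neither side knows how to compare an OnlyEq with an int, so Python
# falls back to an identity check and reports the objects as unequal.
mixed = OnlyEq(1) != 1
```

On Python 2 the same class would need the explicit "__ne__" shown in the sample code.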

If you want an immutable object that can be used as a dictionary key, you will want to implement "__hash__", along with "__eq__" and "__ne__".
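A minimal sketch of that combination follows. The `Point` class and its `_key` helper are my own hypothetical names, and the object is immutable only by convention (nothing stops a caller from touching the underscore attributes). Note that on Python 3 a class that defines "__eq__" without "__hash__" becomes unhashable, so the explicit "__hash__" is required there.

```python
class Point:
    """Hypothetical value object, immutable by convention only."""

    def __init__(self, x, y):
        self._x = x
        self._y = y

    def _key(self):
        # Single source of truth for both equality and hashing.
        return (self._x, self._y)

    def __eq__(self, other):
        if isinstance(other, Point):
            return self._key() == other._key()
        return NotImplemented

    def __ne__(self, other):
        result = self.__eq__(other)
        if result is NotImplemented:
            return result
        return not result

    def __hash__(self):
        # Equal objects must hash equal, so hash what __eq__ compares.
        return hash(self._key())


distances = {Point(0, 0): "origin"}
found = distances[Point(0, 0)]   # a distinct but equal instance finds the entry
```

Hashing the same tuple that "__eq__" compares keeps the two methods from drifting apart.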

If you are implementing inequality comparisons, be careful: supply the full complement of inequality comparisons and take care when using "NotImplemented". In Python 2, the default implementations of less-than ("__lt__"), less-than-or-equals ("__le__"), greater-than ("__gt__") and greater-than-or-equals ("__ge__") aren’t very useful: they compare by address using id().

This default inequality comparison can introduce intermittent bugs in your comparison code. If there is no meaningful comparison between different types or classes, raise a TypeError, so there is no risk of falling back on the terrible default inequality comparison implementation. This problem is fixed in Python 3, where ordering comparisons between objects that do not define them raise a TypeError instead of falling back to the address.
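As a sketch of the Python 3 behaviour, assuming Python 3 and a hypothetical `Version` class: returning NotImplemented for foreign types is enough there, because the interpreter raises the TypeError itself once both operands decline. On Python 2 you would raise TypeError by hand, as described above.

```python
class Version:
    """Hypothetical class that is only orderable against other Versions."""

    def __init__(self, number):
        self.number = number

    def __lt__(self, other):
        if isinstance(other, Version):
            return self.number < other.number
        return NotImplemented


ordered = Version(1) < Version(2)

try:
    Version(1) < "1.0"          # no meaningful order between Version and str
    outcome = "compared"
except TypeError:
    outcome = "TypeError"       # Python 3 refuses instead of comparing addresses
```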

The fastest and most complete solution is this code from Raymond Hettinger, Python Cookbook recipe 576685: Total ordering class decorator.

    def total_ordering(cls):
        'Class decorator that fills-in missing ordering methods'
        convert = {
            '__lt__': [('__gt__', lambda self, other: other < self),
                       ('__le__', lambda self, other: not other < self),
                       ('__ge__', lambda self, other: not self < other)],
            '__le__': [('__ge__', lambda self, other: other <= self),
                       ('__lt__', lambda self, other: not other <= self),
                       ('__gt__', lambda self, other: not self <= other)],
            '__gt__': [('__lt__', lambda self, other: other > self),
                       ('__ge__', lambda self, other: not other > self),
                       ('__le__', lambda self, other: not self > other)],
            '__ge__': [('__le__', lambda self, other: other >= self),
                       ('__gt__', lambda self, other: not other >= self),
                       ('__lt__', lambda self, other: not self >= other)]
        }
        roots = set(dir(cls)) & set(convert)
        assert roots, 'must define at least one ordering operation: < > <= >='
        root = max(roots)  # prefer __lt__ to __le__ to __gt__ to __ge__
        for opname, opfunc in convert[root]:
            if opname not in roots:
                opfunc.__name__ = opname
                opfunc.__doc__ = getattr(int, opname).__doc__
                setattr(cls, opname, opfunc)
        return cls
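A decorator with the same name and purpose ships in the standard library as `functools.total_ordering` (Python 2.7 and 3.2 onward), so on those versions there is no need to paste the recipe. One caveat, offered as my reading of the recipe rather than something the source states: on Python 3 every class inherits all four ordering methods from `object`, so the `dir(cls)` check above appears to find them all and fill in nothing. The sketch below uses the standard library version with a hypothetical `Card` class.

```python
from functools import total_ordering


@total_ordering
class Card:
    """Hypothetical class: only __eq__ and __lt__ are written by hand."""

    def __init__(self, rank):
        self.rank = rank

    def __eq__(self, other):
        if isinstance(other, Card):
            return self.rank == other.rank
        return NotImplemented

    def __lt__(self, other):
        if isinstance(other, Card):
            return self.rank < other.rank
        return NotImplemented


low, high = Card(2), Card(10)
# __le__, __gt__ and __ge__ below are supplied by the decorator.
checks = (low < high, low <= high, high > low, high >= low)
```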

For a lower-tech solution, consider using this mixin class for inequality comparison special methods [from Fuzzyman: http://www.voidspace.org.uk/python/articles/comparison.shtml]:

    class RichComparisonMixin(object):

        def __eq__(self, other):
            raise NotImplementedError("Equality not implemented")

        def __lt__(self, other):
            raise NotImplementedError("Less than not implemented")

        def __ne__(self, other):
            return not self.__eq__(other)

        def __gt__(self, other):
            return not (self.__lt__(other) or self.__eq__(other))

        def __le__(self, other):
            return self.__eq__(other) or self.__lt__(other)

        def __ge__(self, other):
            return not self.__lt__(other)
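To show how the mixin is meant to be used, here is a small sketch with a hypothetical `Money` subclass that supplies only "__eq__" and "__lt__". The mixin is repeated so the example runs on its own. For brevity the subclass assumes it is only ever compared with other `Money` objects.

```python
class RichComparisonMixin(object):
    # Repeated from the mixin above so this sketch is self-contained.
    def __eq__(self, other):
        raise NotImplementedError("Equality not implemented")

    def __lt__(self, other):
        raise NotImplementedError("Less than not implemented")

    def __ne__(self, other):
        return not self.__eq__(other)

    def __gt__(self, other):
        return not (self.__lt__(other) or self.__eq__(other))

    def __le__(self, other):
        return self.__eq__(other) or self.__lt__(other)

    def __ge__(self, other):
        return not self.__lt__(other)


class Money(RichComparisonMixin):
    """Hypothetical subclass; assumes `other` is always a Money."""

    def __init__(self, cents):
        self.cents = cents

    def __eq__(self, other):
        return self.cents == other.cents

    def __lt__(self, other):
        return self.cents < other.cents


# The four remaining operators come from the mixin.
results = (Money(5) > Money(3), Money(3) <= Money(3),
           Money(3) >= Money(5), Money(3) != Money(5))
```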

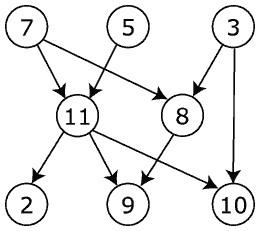

Image via Wikipedia

[Aside & Plug]

Let me take this opportunity to give a plug to the book IronPython in Action, by Michael Foord (Fuzzyman) and Christian Muirhead. The publisher, Manning, has a great service to Python programmers on the book's website:

Python Magic Methods

I was a little disappointed (and surprised) that this great Python magic methods reference didn't give more tips about "__eq__" and "__ne__". Otherwise, it is all great, new material, not just a rehash of the original online Python docs. It is the best summary I have seen, even better than Alex Martelli's Python in a Nutshell.

Matt, thanks for taking the time to put this together. The optimizations applied by promise are certainly not in the same league as something like psyco - they have to be quite well targeted to have any measurable effect.
